Strategic Approaches to Modern Time-Series Management

Selecting the right infrastructure for high-velocity data requires understanding how specialized systems handle high ingestion rates and long-term storage efficiency. When evaluating a time-series database (TSDB) such as TimescaleDB, it helps to understand how the system compresses and compacts stored data, because that is what keeps queries fast while reducing the disk footprint. As organizations move toward industrial IoT and real-time monitoring, the ability to compress data without sacrificing accessibility has become a hallmark of leading time-series solutions.

The Evolution of Specialized Data Storage

Time-series data is distinctive because it is rarely modified once written and arrives mostly in chronological order. Unlike traditional transactional data, which may see frequent updates to existing rows, time-series workloads are append-heavy. This distinction has led to the rise of purpose-built databases designed to handle the specific write-and-read patterns of metrics, events, and traces.

By treating time as a first-class citizen, these systems optimize how data is partitioned across memory and disk, typically by grouping points into time-based chunks. This architectural shift helps the system stay responsive as your data grows from millions to billions of data points, and keeps ingestion smooth even as the total volume of stored information expands.

Key Advantages of Time-Series Engines

A key benefit of a dedicated engine is its ability to handle high-cardinality workloads, where there are thousands or even millions of unique data streams. Many specialized engines also use columnar storage formats, which are typically more efficient for analytical queries than row-based alternatives. This approach allows the system to read only the specific columns needed for a calculation, which can greatly reduce I/O overhead.
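To make the columnar idea concrete, here is a minimal sketch in plain Python. The field names (`ts`, `sensor`, `temp`, `humidity`) and values are made up for illustration, and real engines store columns in compressed on-disk blocks rather than Python lists.

```python
# Hypothetical sensor readings in a row-oriented layout: one record per point.
rows = [
    {"ts": 0, "sensor": "a", "temp": 20.5, "humidity": 40.0},
    {"ts": 10, "sensor": "a", "temp": 21.0, "humidity": 41.0},
    {"ts": 20, "sensor": "a", "temp": 21.5, "humidity": 42.0},
]

# Row layout: averaging one field still walks every full record.
row_avg = sum(record["temp"] for record in rows) / len(rows)

# Columnar layout: each field is stored as its own contiguous sequence.
columns = {name: [record[name] for record in rows] for name in rows[0]}

# Only the "temp" column is touched; "sensor" and "humidity" are never read.
col_avg = sum(columns["temp"]) / len(columns["temp"])
```

Both layouts give the same answer; the difference is how much data has to be read to get there, which matters once a table has dozens of columns and billions of rows.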

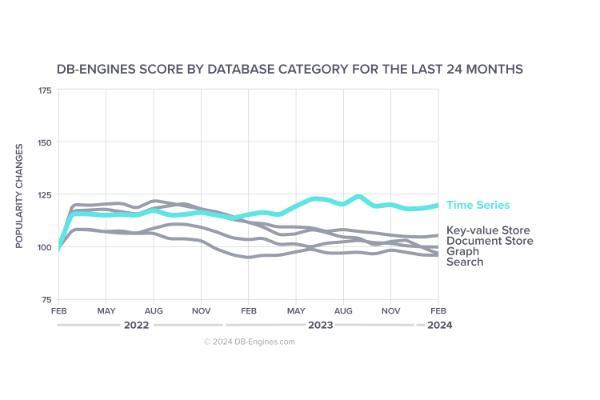

Furthermore, these systems often include built-in functions for time-windowing, downsampling, and gap-filling. When comparing open-source time-series databases, the efficiency gained here often translates into lower hardware costs and reduced operational overhead for long-term industrial projects. By offloading this logic to the database layer, developers can focus on building insightful dashboards rather than managing data structures.
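As a rough illustration of what downsampling and gap-filling do, the sketch below implements both in plain Python. The function names `downsample_mean` and `gap_fill` are hypothetical, not the API of any particular database, and the gap-filling strategy shown (carry the last value forward) is only one of several common choices.

```python
from collections import defaultdict

def downsample_mean(points, bucket_seconds):
    """Average (timestamp, value) points into fixed-width time buckets."""
    sums = defaultdict(lambda: [0.0, 0])  # bucket start -> [running total, count]
    for ts, value in points:
        bucket_start = ts - (ts % bucket_seconds)
        acc = sums[bucket_start]
        acc[0] += value
        acc[1] += 1
    return {start: total / count for start, (total, count) in sorted(sums.items())}

def gap_fill(buckets, start, end, bucket_seconds):
    """Emit one row per bucket in [start, end), carrying the last seen value forward."""
    filled = []
    last = None  # stays None until the first bucket that has data
    for bucket_start in range(start, end, bucket_seconds):
        if bucket_start in buckets:
            last = buckets[bucket_start]
        filled.append((bucket_start, last))
    return filled

# Irregular readings with no data between t=120 and t=180.
points = [(0, 10.0), (20, 14.0), (65, 20.0), (185, 30.0)]
buckets = downsample_mean(points, 60)   # one-minute averages
series = gap_fill(buckets, 0, 240, 60)  # one row per minute, gaps filled
```

Here the empty bucket starting at t=120 receives the value of the previous bucket, so a dashboard plotting `series` shows a continuous line instead of a hole.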

Data Retention and Lifecycle Management

One of the most powerful features of modern time-series platforms is automated lifecycle management. Instead of manually deleting old records, users can define policies that automatically move older data to cheaper storage tiers or summarize it into aggregate views. This keeps the database lean and ensures that the most relevant information is always the most accessible.

This automated tiering ensures that the most recent, high-precision data is kept in high-performance storage for immediate analysis, while historical trends remain accessible for long-term auditing and forecasting. This balance is crucial for maintaining a cost-effective data strategy in the face of exponential data growth common in modern digital environments.
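A lifecycle policy of this kind can be sketched as a small rule that classifies each time-based chunk by age. The `LifecyclePolicy` class, the `classify_chunk` helper, and the thresholds are assumptions for illustration; real platforms expose their own policy syntax.

```python
from dataclasses import dataclass

DAY = 86_400  # seconds per day

@dataclass(frozen=True)
class LifecyclePolicy:
    hot_seconds: int     # keep raw data on fast storage while younger than this
    retain_seconds: int  # drop data entirely once it is at least this old

def classify_chunk(chunk_end: int, now: int, policy: LifecyclePolicy) -> str:
    """Decide what to do with a chunk whose newest point has timestamp chunk_end."""
    age = now - chunk_end
    if age >= policy.retain_seconds:
        return "drop"
    if age >= policy.hot_seconds:
        return "cold"  # candidate for cheaper storage or summarization
    return "hot"

policy = LifecyclePolicy(hot_seconds=7 * DAY, retain_seconds=365 * DAY)
now = 400 * DAY
# Map each chunk's end day to the action the policy selects for it.
actions = {
    end // DAY: classify_chunk(end, now, policy)
    for end in (399 * DAY, 380 * DAY, 10 * DAY)
}
```

A background job that runs this classification periodically is the essence of automated retention: no one deletes rows by hand, and whole chunks are moved or dropped at once.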

Performance Benchmarking in Industrial Contexts

In industrial settings, the stakes for data integrity and latency are high. Databases must be able to ingest data from thousands of sensors, sometimes at millisecond intervals, without dropping points. Benchmarks in these environments often focus on ingest-at-scale, measuring how many millions of points per second a system can handle before latency begins to climb.

Modern engines achieve these numbers by using structures that optimize for sequential writes, such as append-only logs and log-structured designs. By deferring sorting and index maintenance until after the write has been accepted, these systems can sustain high performance even during peak traffic bursts. This reliability is why specialized time-series databases are widely used for mission-critical infrastructure and real-time financial tracking.
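The write path described above can be approximated with a simplified log-structured buffer: writes are plain appends, and sorting happens only when a batch is flushed. The `WriteBuffer` class is a hypothetical teaching model, not the storage engine of any specific product, and it keeps "segments" in memory where a real system would write files.

```python
class WriteBuffer:
    """Append-only in-memory buffer that flushes sorted, immutable segments."""

    def __init__(self, flush_threshold):
        self.flush_threshold = flush_threshold
        self.buffer = []    # unsorted batch of (timestamp, value) points
        self.segments = []  # immutable sorted batches (stand-in for on-disk files)

    def write(self, ts, value):
        # Constant-time append: no index is updated on the write path.
        self.buffer.append((ts, value))
        if len(self.buffer) >= self.flush_threshold:
            self.flush()

    def flush(self):
        if not self.buffer:
            return
        # Sort once per batch, then write the batch out sequentially.
        self.segments.append(tuple(sorted(self.buffer)))
        self.buffer = []

store = WriteBuffer(flush_threshold=2)
for ts, value in [(30, 1.0), (10, 2.0), (20, 3.0), (50, 4.0), (40, 5.0)]:
    store.write(ts, value)
```

After these five writes, two sorted segments have been flushed and one point is still buffered. Out-of-order arrivals within a batch are fixed at flush time rather than on every insert.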

Architecture Decisions for Modern Scaling

When deciding on a core architecture, the TSDB vs. RDBMS debate highlights the fundamental trade-offs between general-purpose flexibility and specialized performance. While a relational database is excellent for complex joins and ACID-compliant transactions across diverse datasets, a time-series database is optimized specifically for the temporal dimension. This specialization allows for much higher ingestion rates and significantly better compression ratios for repetitive sensor data.

By using a dedicated time-series engine, organizations can achieve better query performance on time-range scans, a task that can cause a traditional relational database to struggle as table size increases. This makes specialized engines the preferred choice for telemetry, financial market data, and large-scale monitoring projects where time is the primary axis of analysis.
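One reason time-range scans stay fast is time-based partitioning: a query only opens the chunks that overlap its range. The sketch below assumes fixed one-hour chunks; `ChunkedSeries` and `CHUNK_SECONDS` are illustrative names, not part of any real database API.

```python
from collections import defaultdict

CHUNK_SECONDS = 3600  # assumed chunk width: one hour

class ChunkedSeries:
    """Stores points in per-hour chunks so range scans can skip unrelated data."""

    def __init__(self):
        self.chunks = defaultdict(list)  # chunk id -> list of (timestamp, value)

    def insert(self, ts, value):
        self.chunks[ts // CHUNK_SECONDS].append((ts, value))

    def range_scan(self, start, end):
        """Return points with start <= ts < end and the number of chunks opened."""
        first = start // CHUNK_SECONDS
        last = (end - 1) // CHUNK_SECONDS
        rows, touched = [], 0
        for chunk_id in range(first, last + 1):
            if chunk_id not in self.chunks:
                continue  # no data was ever written for this hour
            touched += 1
            rows.extend(p for p in self.chunks[chunk_id] if start <= p[0] < end)
        return sorted(rows), touched

series = ChunkedSeries()
for ts in range(0, 36_000, 600):  # ten hours of data, one point every 10 minutes
    series.insert(ts, float(ts))

rows, touched = series.range_scan(7_200, 10_800)  # query a single hour
```

The query returns six points and opens one chunk out of ten. The cost of the scan depends on the width of the requested time range, not on the total size of the table.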

Optimizing Storage Efficiency with Advanced Encoding

Beyond general-purpose compression, high-end time-series databases employ specialized encoding techniques. These methods take advantage of the fact that many time-series values change only slightly from one point to the next. By storing only the difference or "delta" between consecutive values, which is usually a much smaller number than the value itself and needs fewer bits, the system can achieve substantial reductions in storage requirements.

This not only saves money on disk space but also improves query performance, as the system needs to read fewer bytes from the disk to answer a request. Efficient encoding is a key reason why modern databases can store years of high-resolution data on relatively modest hardware configurations.
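A minimal sketch of delta encoding on integer timestamps shows why this works. The helper names `delta_encode` and `delta_decode` are illustrative; production engines combine this idea with bit-packing and other schemes that are not shown here.

```python
from itertools import accumulate

def delta_encode(values):
    """Keep the first value, then store each value as the difference from its predecessor."""
    if not values:
        return []
    return [values[0]] + [cur - prev for prev, cur in zip(values, values[1:])]

def delta_decode(encoded):
    """Rebuild the original values with a running sum over the deltas."""
    return list(accumulate(encoded))

# Hypothetical timestamps from a sensor that reports every 10 seconds.
timestamps = [1_700_000_000, 1_700_000_010, 1_700_000_020, 1_700_000_030]
encoded = delta_encode(timestamps)
decoded = delta_decode(encoded)
```

The encoded form is `[1700000000, 10, 10, 10]`: after the first entry, every number fits in a single byte instead of needing a full 32-bit or 64-bit integer. Applying the same step again to the deltas (often called delta-of-delta) would turn the regular interval into a run of zeros, which compresses even further.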

Integration with the Modern Data Stack

A database does not exist in a vacuum. The most successful implementations are those that integrate seamlessly with tools like Grafana for visualization, Spark for heavy-duty processing, and various message brokers. Having a database that speaks the language of the broader ecosystem is just as important as its internal speed.

Modern time-series solutions often provide native connectors and support either standard SQL or a specialized query language. This ease of integration ensures that data scientists and analysts can use the tools they are already familiar with to extract value from the stored time-series data, bridging the gap between raw ingestion and actionable business intelligence.

The Future of Temporal Data Analysis

As we look toward the future, the integration of machine learning directly into the database layer is becoming a reality. Some time-series engines are beginning to incorporate anomaly detection and predictive modeling as native functions. This reduces the need to move data to external platforms for basic analysis, further increasing efficiency.

This evolution will allow systems to not just store what happened in the past, but to actively help users identify trends and patterns. By combining efficient storage, high-speed ingestion, and built-in intelligence, the next generation of time-series databases will continue to be the backbone of the data-driven enterprise.