Unlocking AI Agent Observability with AgenticAnts' Real-Time Insights

As enterprises deploy increasingly sophisticated AI agents capable of autonomous decision-making and multi-step reasoning, a new challenge has emerged from the shadows of the machine room: observability. Traditional monitoring tools designed for static software applications are utterly unequipped to trace the winding, sometimes unpredictable paths of autonomous agents. When an AI agent makes a decision that impacts a customer order or generates a report that influences strategy, knowing what happened is no longer enough. Organizations need to understand why it happened, what alternatives were considered, and how the agent arrived at its conclusion. AgenticAnts has stepped into this visibility vacuum with a real-time observability platform that illuminates the inner workings of AI agents, transforming them from mysterious black boxes into transparent, accountable digital workers.

Why Traditional Monitoring Fails Autonomous Agents

The monitoring tools that enterprises have relied on for decades were built for deterministic systems. A traditional application follows predictable code paths; if something goes wrong, you can trace the execution step by step. AI agents, by contrast, are probabilistic and context-dependent. The same prompt might lead to different reasoning paths depending on the agent's internal state, the conversation history, or even the time of day. Traditional logs capture outputs but miss the cognitive journey. AgenticAnts recognized this fundamental mismatch early, building an observability layer designed specifically for the non-deterministic nature of agentic AI. Rather than showing only what the agent did, the platform shows how the agent reached that result, providing visibility into the reasoning processes that generate outcomes.

The AgenticAnts Observability Architecture

At the core of AgenticAnts' solution is what the company calls "cognitive tracing"—a technical architecture that captures the decision-making process of AI agents without interfering with their performance. When an agent receives a task, the platform records not just the final response but every intermediate step: which tools were considered, why certain options were rejected, what information was retrieved from memory, and how confidence scores evolved during reasoning. This trace is captured in real time and presented through an intuitive interface that allows developers and business users alike to follow the agent's thought process. The architecture is designed to be lightweight, adding minimal latency while providing maximum visibility, a balance that previous monitoring solutions failed to achieve.
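To make the idea of a cognitive trace concrete, here is a minimal Python sketch of the kind of record such a layer might keep. It is an illustration only, not AgenticAnts' actual data model or API: the names `TraceStep`, `CognitiveTrace`, `record`, and `confidence_curve`, along with the step kinds, are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from time import perf_counter
from typing import Any, Dict, List, Optional


@dataclass
class TraceStep:
    """One intermediate step in an agent's reasoning (hypothetical schema)."""
    kind: str                      # e.g. "memory_read", "tool_considered", "tool_rejected"
    detail: Dict[str, Any]         # free-form context for the step
    confidence: Optional[float]    # agent-reported confidence, if any
    elapsed_ms: float              # time since the trace started


@dataclass
class CognitiveTrace:
    """Collects steps for a single task, in the order they occur."""
    task: str
    steps: List[TraceStep] = field(default_factory=list)
    _start: float = field(default_factory=perf_counter, repr=False)

    def record(self, kind: str, confidence: Optional[float] = None, **detail: Any) -> TraceStep:
        # Appending to an in-memory list keeps the per-step overhead small.
        elapsed_ms = (perf_counter() - self._start) * 1000
        step = TraceStep(kind, detail, confidence, elapsed_ms)
        self.steps.append(step)
        return step

    def confidence_curve(self) -> List[float]:
        # How confidence evolved across the steps that reported one.
        return [s.confidence for s in self.steps if s.confidence is not None]


trace = CognitiveTrace(task="refund request")
trace.record("memory_read", key="refund_policy")
trace.record("tool_considered", confidence=0.4, tool="calculator")
trace.record("tool_rejected", confidence=0.4, tool="calculator", reason="needs order data")
trace.record("tool_selected", confidence=0.8, tool="orders_db")
print(trace.confidence_curve())  # [0.4, 0.4, 0.8]
```

A real system would ship these records to a backend asynchronously rather than hold them in memory, but the shape of the data (step kind, context, confidence, timing) is the part this sketch is meant to show.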

Real-Time Visibility into Multi-Step Reasoning

Modern AI agents rarely answer questions directly. They break complex tasks into sequences of actions, sometimes looping back to refine their approach or pivoting when initial strategies fail. Following these multi-step journeys manually is like tracking a single conversation across a crowded party. AgenticAnts provides real-time visibility into this complexity through interactive trace visualizations that show each step, each decision point, and each tool invocation. When an agent spends thirty seconds researching before answering, the platform reveals what it was doing during that time. When it changes its mind midway through a response, the observability layer captures the pivot. This real-time insight transforms debugging from guesswork into science, allowing teams to understand agent behavior as it unfolds.

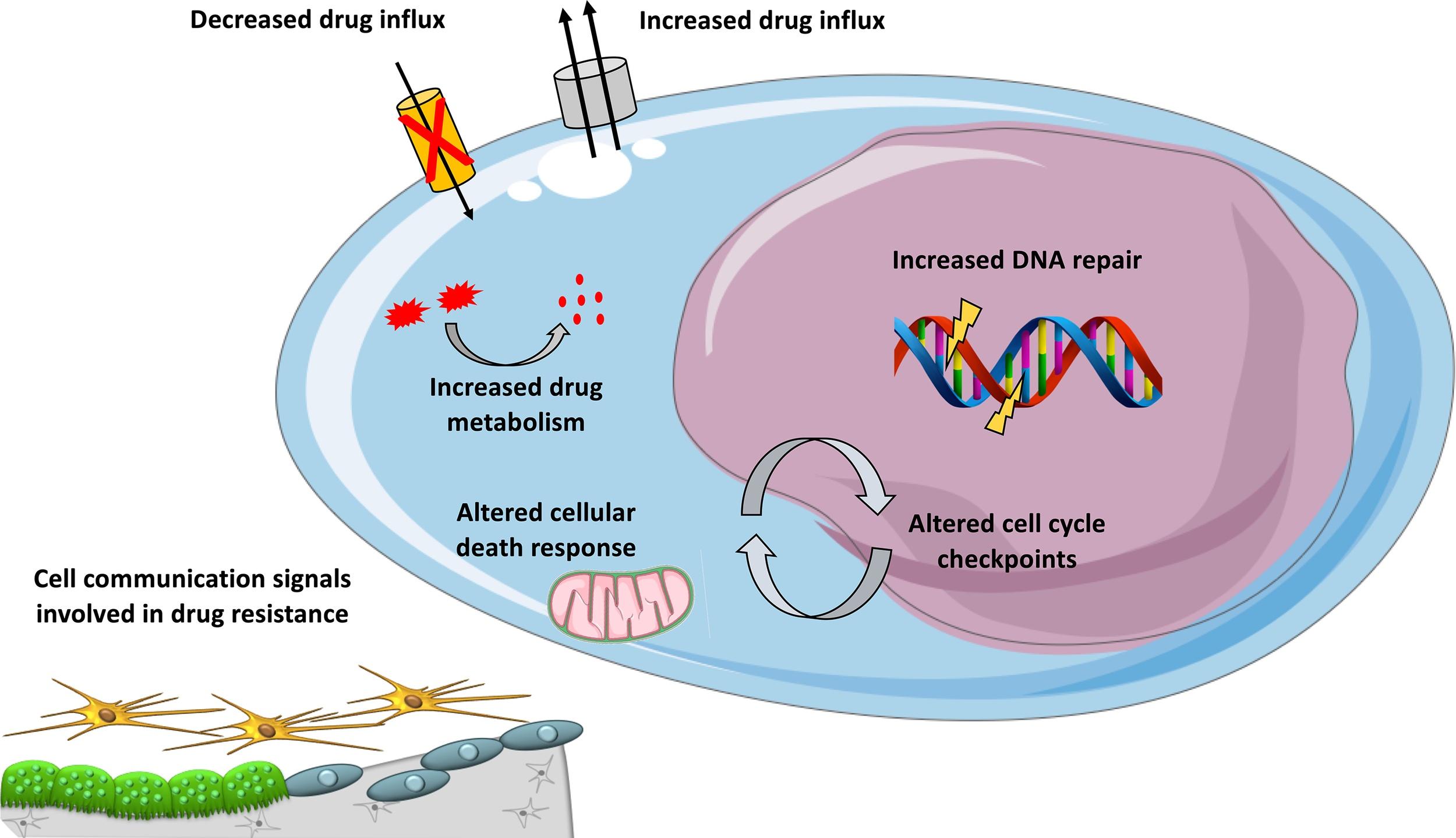

Understanding Tool Selection and Usage

One of the most critical aspects of agent observability is visibility into tool usage. Modern agents have access to dozens of tools—search engines, databases, calculators, APIs—and their effectiveness depends on choosing the right tool for each subtask. AgenticAnts captures every tool invocation with full context: which tool was selected, what input was provided, what output was received, and most importantly, why that tool was chosen over alternatives. When an agent incorrectly uses a calculator instead of a database, the observability layer reveals the reasoning error. When it struggles to parse tool outputs, the platform shows exactly where the breakdown occurred. This tool-centric visibility helps organizations optimize their agent architectures and improve tool selection over time.
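One common way to capture tool invocations with context is to wrap each tool in a decorator that logs the input, the output, and the stated reason for choosing it. The sketch below shows that pattern in Python under stated assumptions: `traced_tool`, `TOOL_LOG`, and the `rationale` keyword are hypothetical names invented for this example, and nothing here should be read as the platform's real interface.

```python
import functools
from typing import Any, Callable, Dict, List

# In-memory log for the example; a real deployment would send entries elsewhere.
TOOL_LOG: List[Dict[str, Any]] = []


def traced_tool(name: str) -> Callable:
    """Wrap a tool so every call is logged with input, output, and rationale."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, rationale: str = "", **kwargs: Any) -> Any:
            entry: Dict[str, Any] = {
                "tool": name,
                "input": {"args": args, "kwargs": kwargs},
                "rationale": rationale,  # why the agent picked this tool
            }
            try:
                entry["output"] = fn(*args, **kwargs)
                entry["ok"] = True
                return entry["output"]
            except Exception as exc:
                # Record the failure, then let the caller handle it as usual.
                entry["ok"] = False
                entry["error"] = repr(exc)
                raise
            finally:
                TOOL_LOG.append(entry)
        return wrapper
    return decorator


@traced_tool("calculator")
def add(a: float, b: float) -> float:
    return a + b


result = add(2, 3, rationale="user asked for a sum; no lookup needed")
print(result, TOOL_LOG[0]["tool"], TOOL_LOG[0]["rationale"])
```

Because the rationale travels with each call, a reviewer who later sees a calculator used where a database lookup was needed can read the agent's stated reason alongside the input and output.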

Performance Metrics That Actually Matter

Observability isn't just about understanding individual agent decisions; it's about measuring system health across deployments. AgenticAnts aggregates observability data into meaningful performance metrics that reflect the unique characteristics of agentic AI. Task completion rates show how often agents successfully finish assigned work. Reasoning efficiency measures the number of steps required to reach conclusions. Tool accuracy tracks how often agents select and use tools correctly. Confidence calibration reveals whether agent certainty correlates with actual correctness. These metrics, updated in real time and available through customizable dashboards, give organizations a true picture of how their AI workforces are performing, enabling data-driven decisions about improvements and investments.
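The four metrics named above can be computed from per-run summaries in a few lines. The following Python sketch is one plausible set of definitions, not the platform's own formulas: the `RunSummary` fields are assumed for illustration, and the calibration measure is a deliberately crude gap between mean stated confidence and observed accuracy rather than a full calibration analysis.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunSummary:
    """Per-run summary (hypothetical fields for this example)."""
    completed: bool          # did the agent finish the task?
    steps: int               # reasoning steps taken
    tool_calls: int          # total tool invocations
    correct_tool_calls: int  # invocations judged appropriate
    confidence: float        # agent's stated confidence in its answer, 0..1
    correct: bool            # was the final answer actually right?


# All functions assume a non-empty list with at least one completed run
# and at least one tool call; a production version would guard these cases.

def task_completion_rate(runs: List[RunSummary]) -> float:
    return sum(r.completed for r in runs) / len(runs)


def reasoning_efficiency(runs: List[RunSummary]) -> float:
    # Mean number of steps among completed runs (lower is more efficient).
    done = [r.steps for r in runs if r.completed]
    return sum(done) / len(done)


def tool_accuracy(runs: List[RunSummary]) -> float:
    return sum(r.correct_tool_calls for r in runs) / sum(r.tool_calls for r in runs)


def calibration_gap(runs: List[RunSummary]) -> float:
    # Crude check: distance between mean confidence and observed accuracy.
    mean_conf = sum(r.confidence for r in runs) / len(runs)
    accuracy = sum(r.correct for r in runs) / len(runs)
    return abs(mean_conf - accuracy)


runs = [
    RunSummary(True, 4, 2, 2, 0.9, True),
    RunSummary(True, 6, 3, 2, 0.8, False),
    RunSummary(False, 10, 5, 3, 0.6, False),
    RunSummary(True, 5, 2, 2, 0.7, True),
]
print(f"completion={task_completion_rate(runs):.2f}")
print(f"mean steps={reasoning_efficiency(runs):.2f}")
print(f"tool accuracy={tool_accuracy(runs):.2f}")
print(f"calibration gap={calibration_gap(runs):.2f}")
```

A calibration gap near zero suggests the agent's certainty tracks its correctness on average; a large gap, as in this sample data, signals overconfidence or underconfidence worth investigating.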

Debugging and Optimization Through Trace Analysis

When agents misbehave, understanding why is the first step toward improvement. AgenticAnts transforms debugging from a frustrating black-box exercise into a structured investigation. Developers can search for problematic interactions, examine complete cognitive traces, and identify exactly where reasoning went wrong. Perhaps the agent misunderstood the user's intent, or maybe it selected an inappropriate tool, or perhaps it lacked necessary context in its memory. Each trace provides the raw material for optimization, whether through prompt refinement, tool improvements, or memory enhancements. This debug-first approach means organizations don't just observe problems; they fix them, continuously improving agent performance through insight-driven iteration.
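Searching traces for the point where reasoning went wrong can be as simple as scanning each trace for the first step of a given kind. The Python sketch below assumes traces are stored as plain dictionaries with an `id` and a list of `steps`; that layout, the step kinds, and the function name `find_first_step` are all invented for illustration.

```python
from typing import Any, Dict, List, Tuple

Trace = Dict[str, Any]  # assumed shape: {"id": str, "steps": [{"kind": str, ...}, ...]}


def find_first_step(traces: List[Trace], kind: str) -> List[Tuple[str, int]]:
    """Return (trace id, step index) for the first step of `kind` in each matching trace."""
    hits: List[Tuple[str, int]] = []
    for trace in traces:
        for index, step in enumerate(trace["steps"]):
            if step["kind"] == kind:
                hits.append((trace["id"], index))
                break  # only the earliest occurrence matters for debugging
    return hits


traces = [
    {"id": "t1", "steps": [{"kind": "plan"}, {"kind": "tool_call"}, {"kind": "answer"}]},
    {"id": "t2", "steps": [{"kind": "plan"}, {"kind": "tool_error"},
                           {"kind": "tool_call"}, {"kind": "tool_error"}]},
]
print(find_first_step(traces, "tool_error"))  # [('t2', 1)]
```

Pointing at the earliest failing step, rather than every failure, keeps attention on the likely root cause instead of its downstream effects.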

Building Trust Through Transparency

Perhaps the most valuable outcome of robust observability is organizational trust. Stakeholders who are understandably nervous about autonomous systems making consequential decisions gain confidence when they can see inside the machine. AgenticAnts makes this transparency practical, providing business users with understandable explanations of agent reasoning alongside the technical details developers need. When a customer service agent makes an unusual refund decision, the observability layer shows exactly what policy it considered. When a research agent produces an unexpected conclusion, the platform reveals the sources it consulted. This transparency transforms AI agents from mysterious entities into accountable team members, building the trust necessary for widespread enterprise adoption. In an era where AI autonomy is expanding rapidly, AgenticAnts ensures that visibility keeps pace with capability, keeping humans firmly in the loop even as machines take on increasingly sophisticated work.